Satyapalsinh Gohil

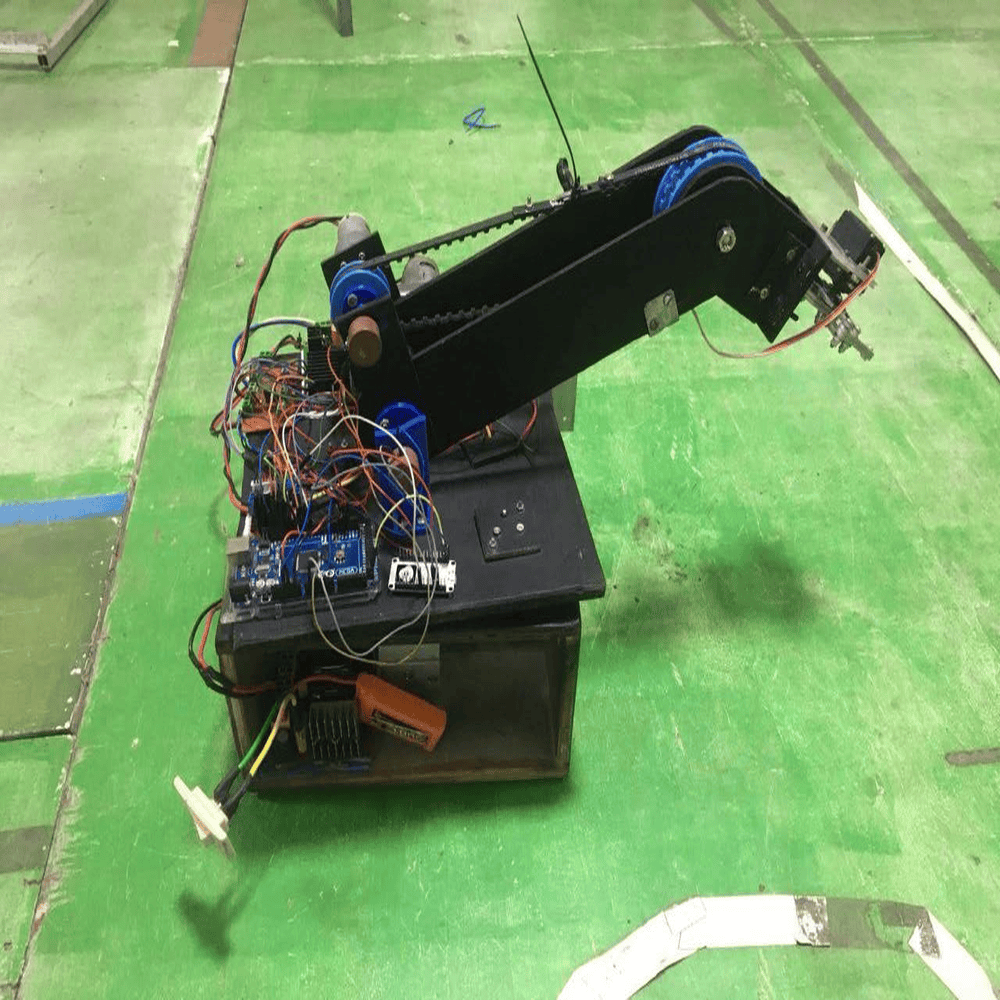

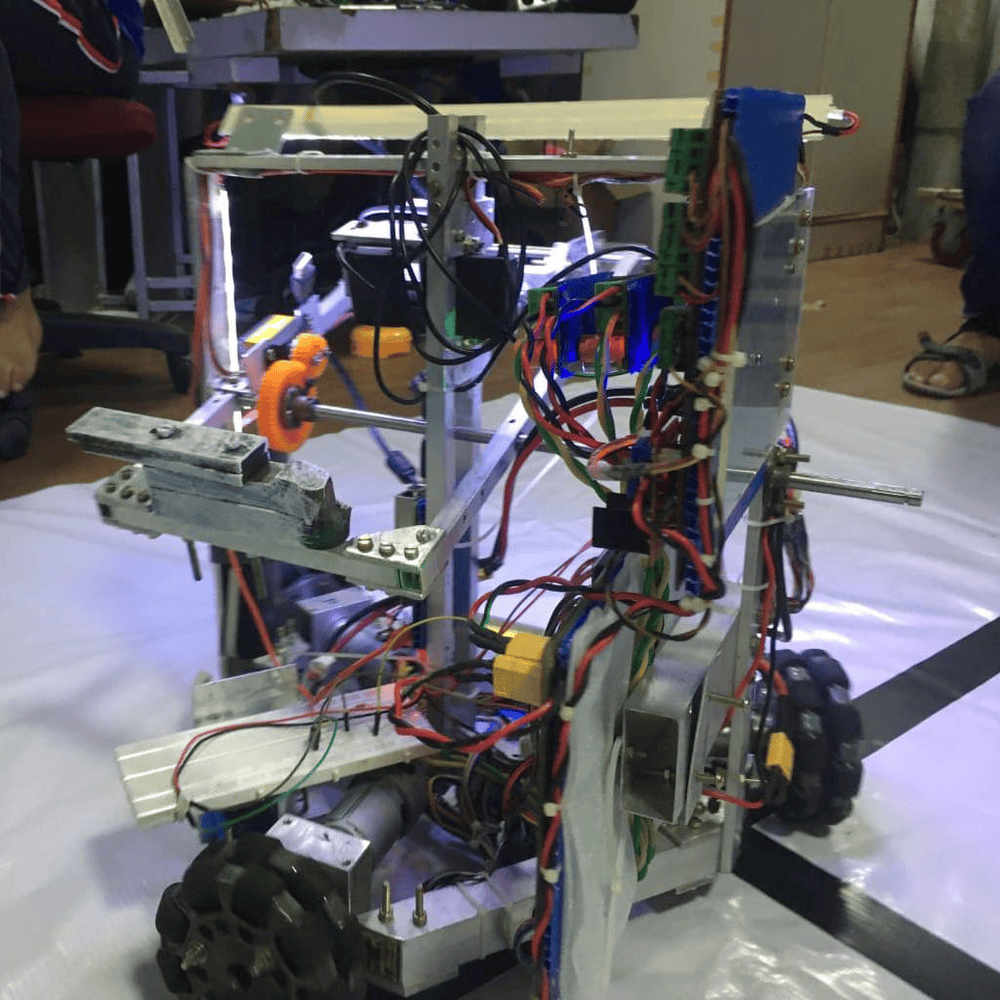

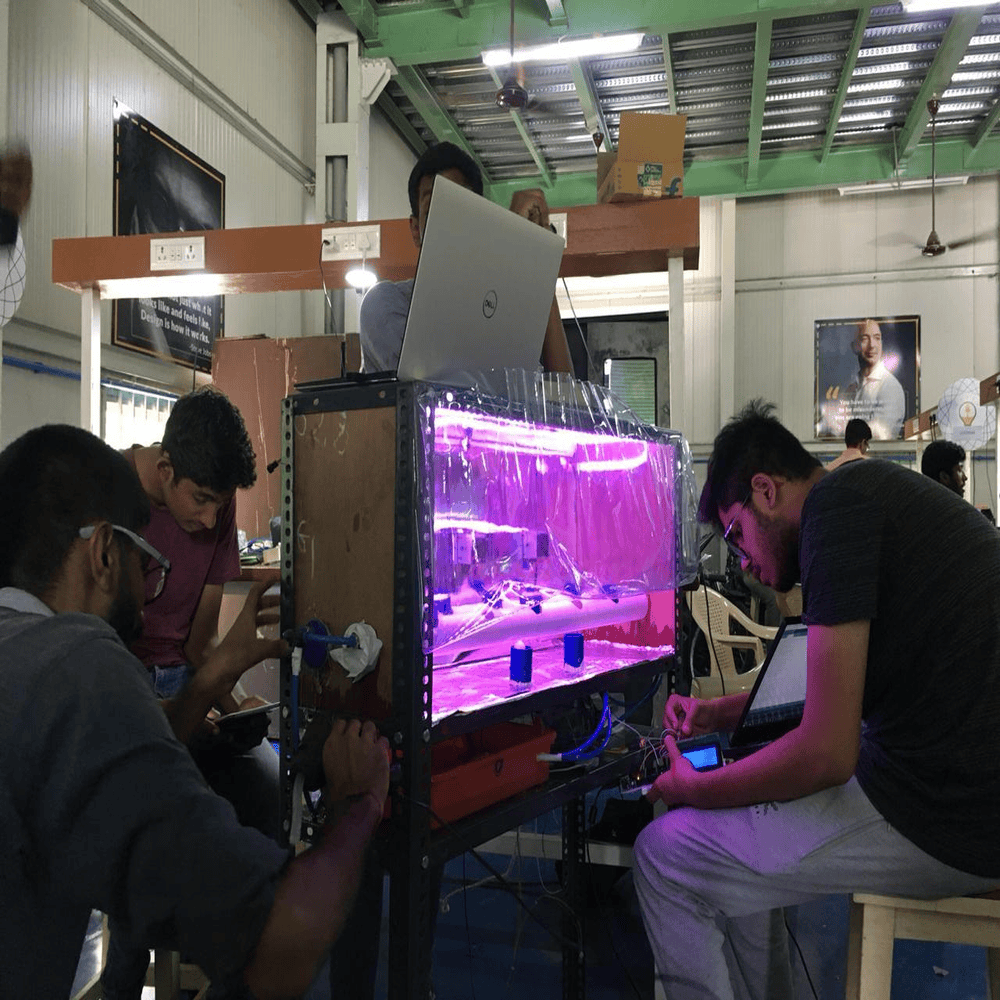

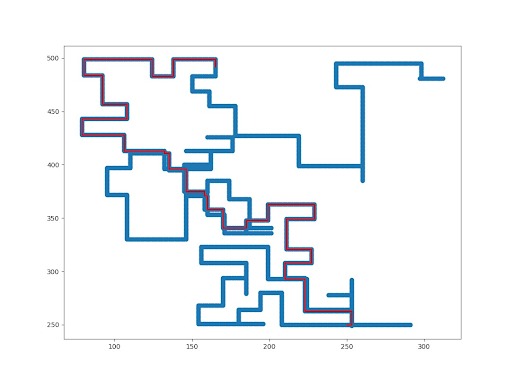

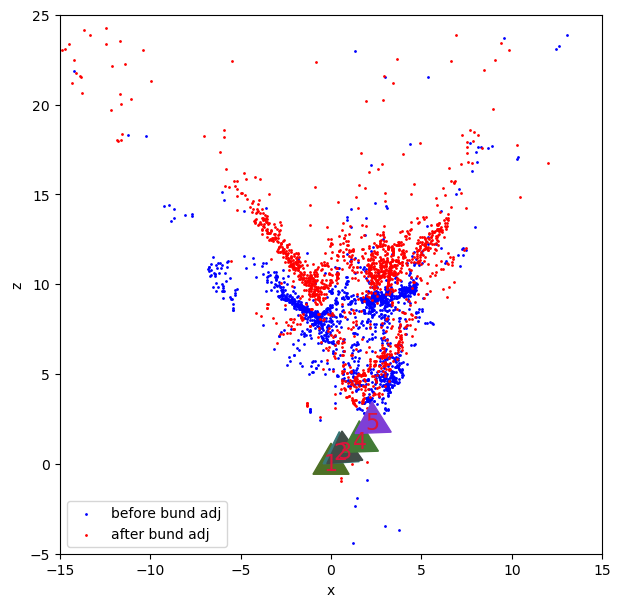

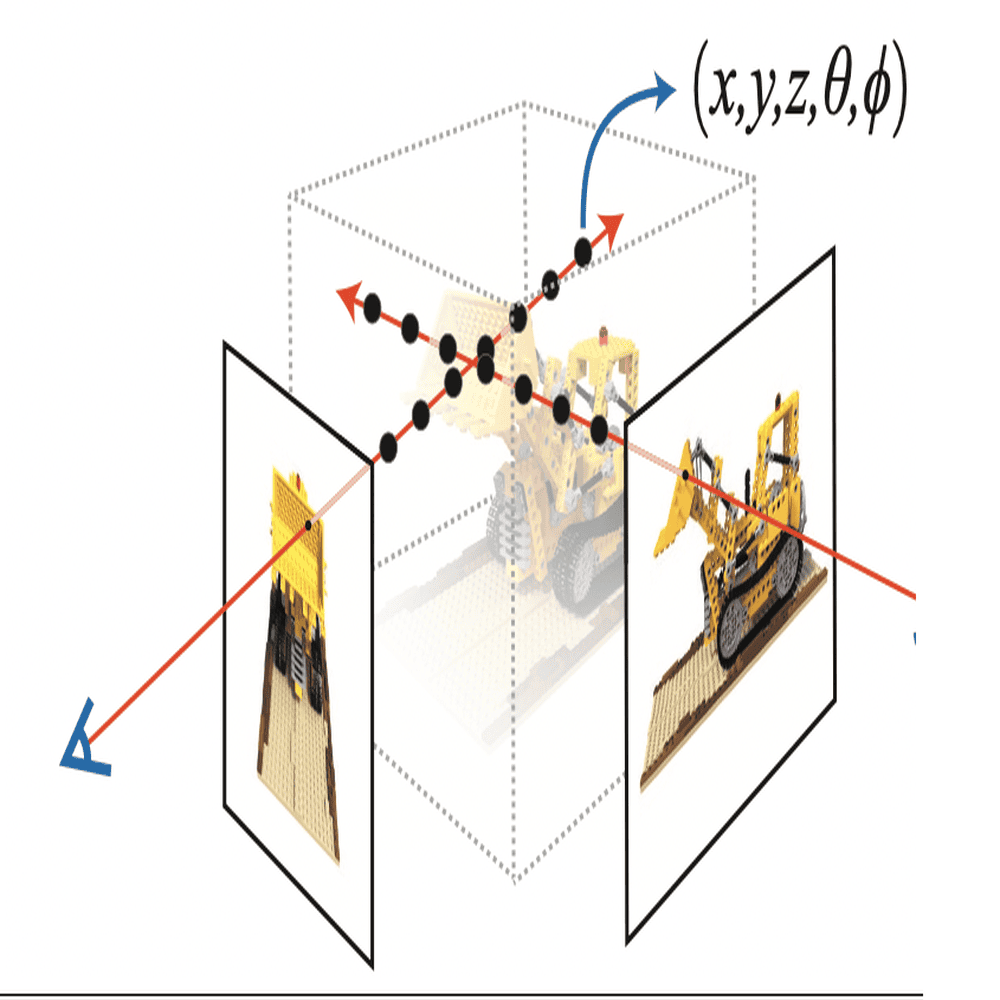

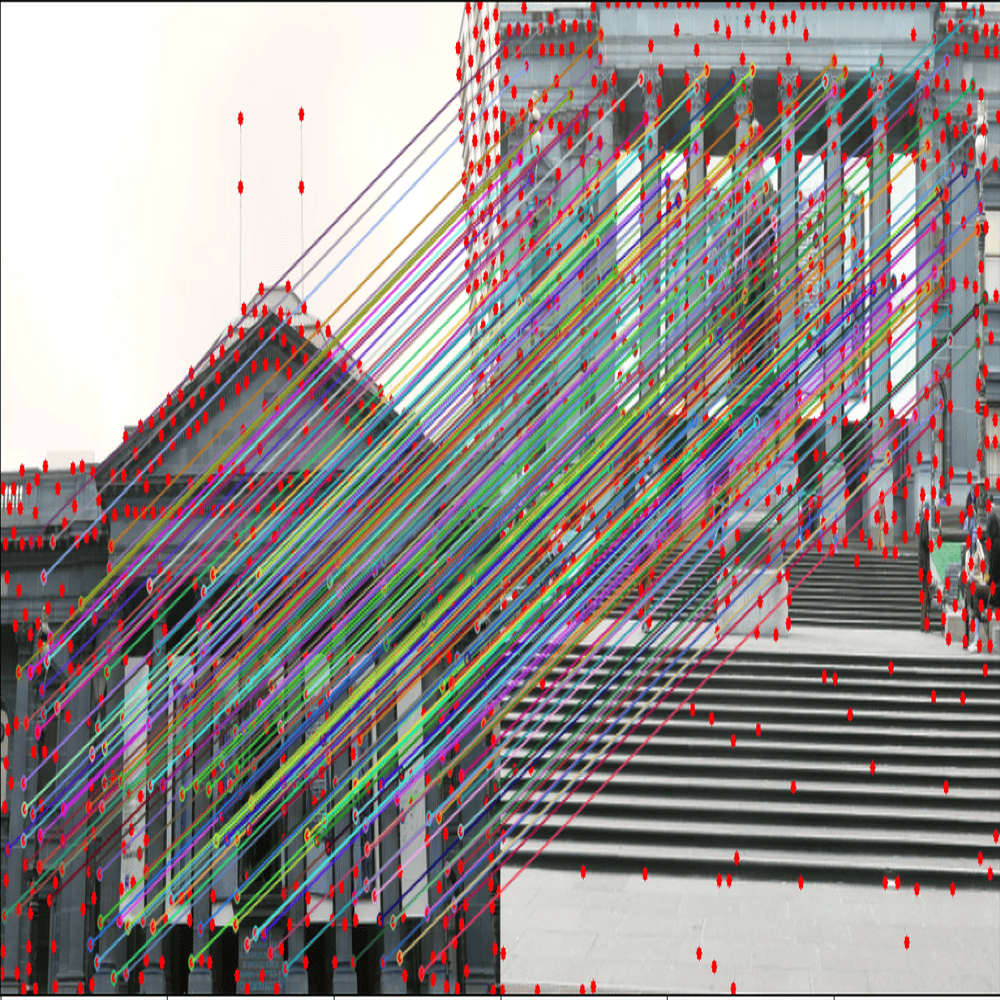

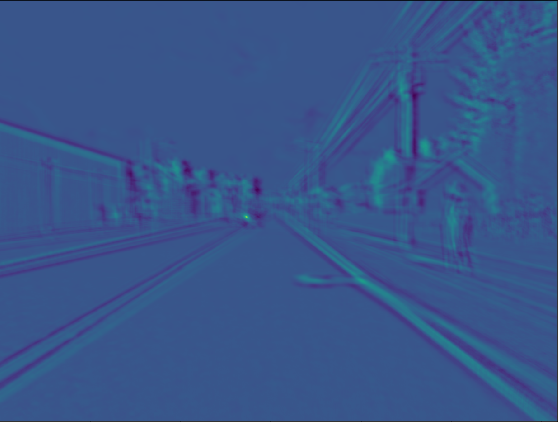

Robotics Engineer | Perception and Deep Learning

I am a graduate of New York University (NYU), where I earned my Master's degree in Robotics. My academic journey and research have been deeply rooted in the intersection of robotics, computer vision, and deep learning.

I completed my undergraduate studies at SRM Institute of Science and Technology, where I honed my robotics skills as a dedicated member of SRM Team Robocon for three years. This experience laid a solid foundation for my professional career, which included two years as a research engineer at Honda R&D.

During my Master's at NYU, I was affiliated with the AI4CE Lab, where I focused on developing a Transformer-based point cloud registration pipeline. The project aligned sparse 3D point cloud data with 2D overhead image planes to improve indoor mapping in GPS-denied environments. I had the privilege of working under the guidance of Professor Chen Feng. I also contributed to the Mapping NYC project, integrating and calibrating multiple sensors to generate 3D point cloud maps of New York City. This work played a key role in supporting autonomous vehicle testing and urban research, advancing real-time mapping technologies for smart city applications.

With a strong background in robotics and a deep passion for machine learning, my goal is to continue contributing to the field by developing innovative solutions to complex, real-world challenges. I am dedicated to advancing technologies that not only push the envelope in computer vision and deep learning but also make a tangible impact on society.
